Six months ago, under tremendous public pressure, YouTube announced that it would tweak its algorithm to recommend fewer videos “that could misinform users in harmful ways.” It was a major step for a company that has spent years driving people toward increasingly sensationalist content — including dangerous disinformation — that would keep viewers glued to their screens for as long as possible to maximise advertising revenue.

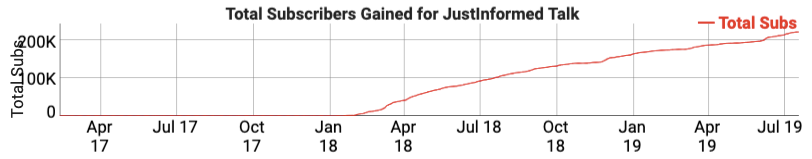

The announcement in late January triggered panic within YouTube’s sprawling network of conspiracy theorists. The host of JustInformed Talk, a channel with nearly a quarter-million subscribers that spreads QAnon theories and baseless claims about Democratic politicians, warned in a February video that the new measure was sure to “instantly destroy” the ability of pages like his to get traffic.

He was wrong. The audience for YouTube’s top conspiracy theory channels is still growing, a HuffPost investigation has found.

Using web analytics tools such as Social Blade and in consultation with experts, we analysed more than a dozen major YouTube channels that produce conspiracy theory videos to see how their subscriber counts, viewership and estimated earnings have changed. Some channels are growing at slower rates than before, others at around the same rates or a bit more rapidly. Changes to video production levels can affect growth, but as these YouTube channels’ enormous subscriber bases continue to consume and share their videos, all are still drawing in new viewers — and the creators behind them remain undeterred.

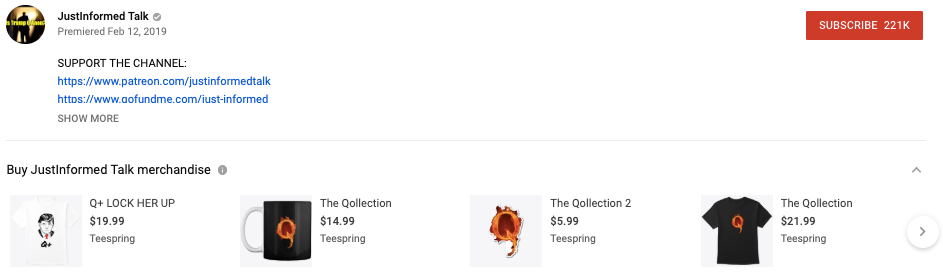

There are significant financial incentives for conspiracy theorists to keep churning out clickbait disinformation on YouTube: They can still promote their merchandise and third-party fundraising pages on their videos, and they can still take a cut of the earnings from ads on their content through YouTube’s monetisation program. The payoff can be huge.

Views from video recommendations, which can be especially vital for new YouTube pages trying to develop audiences, have been cut in half for content featuring harmful misinformation, a YouTube spokesperson told HuffPost. But for massive conspiracy theory channels like JustInformed Talk — channels that YouTube’s algorithm has already catapulted into notoriety, giving them large and loyal followings — the change has been largely ineffective in suppressing their influence.

Since uploading the video forecasting its own demise, JustInformed Talk has gained almost 50,000 new subscribers for a total of more than 222,000. It’s still verified on YouTube, and its average daily views per month nearly doubled between the end of January and the start of June (the most recent data available for that metric). The channel, which circulates falsehoods including the existence of a deep-state cabal of elite liberal and Hollywood pedophiles, has also seen its earnings increase, for an annual income of up to £111,500, Social Blade estimates.

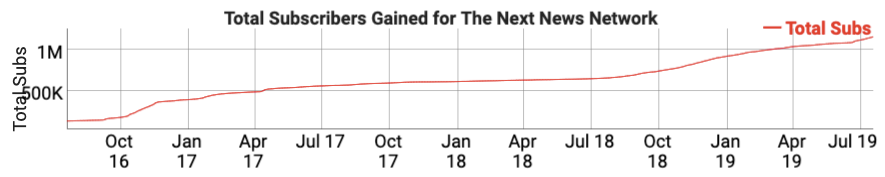

Next News Network, a rightwing conspiracy theory channel designed to appear like a legitimate news source, has close to 1.2 million subscribers and uploads multiple videos per day, including a stream of fake news. Also verified on YouTube, it has posted videos falsely claiming that Carmen Yulín Cruz, the mayor of disaster-torn San Juan, Puerto Rico, was arrested for corruption involving relief funds; that House Speaker Nancy Pelosi (D-Calif.) made a speech while drunk; that former president Bill Clinton raped a teenager; that the Clinton Foundation was linked to the murder of a Canadian couple; and that the Centers for Disease Control and Prevention urged people against getting the flu shot.

The channel is run by prominent conspiracy theorist Gary Franchi, who regularly accuses YouTube of targeting him as part of a broader campaign of partisan censorship — a narrative often pushed by far-right extremists trying to paint themselves as victims of big tech. Franchi and other conspiracy theorists have actually capitalised on YouTube’s change to its algorithm by using it as fodder to rally their bases for increased grassroots promotion.

“Conservative censorship is on the rise, and we cannot let algorithms dictate your requests of news and opinion on this channel that we produce for you,” he said at the start of a recent video, as he always does before imploring viewers to share and subscribe. “Now it’s up to you to help us reach new people. Please tell a friend about Next News. When you’re at the dinner table, bring up our reports, say how much you love Next News and Gary Franchi.”

In another video last week, Franchi, who did not respond to HuffPost’s request for an interview, described his audience as “the lifeblood of our channel,” and said that “because YouTube has removed our ability to reach new viewers, we now rely 100 percent on you to grow.”

Within an hour of being uploaded, the video had been viewed some 30,000 times.

Franchi’s videos still bring in an average of up to £1,800 daily for a rising annual income of as much as £712,600. And despite his repeated claim that YouTube has “removed our ability to reach new viewers,” his subscriber count is still steadily increasing.

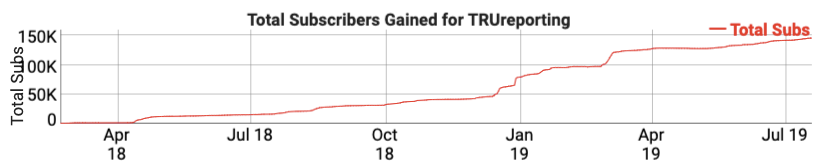

TRUreporting, another YouTube-verified conspiracy theory channel, earns up to £61,000 annually from ad revenue on the platform, in addition to direct donations from users. (YouTube’s “Super Chat” feature allows people to pay to have their comments highlighted and pinned during livestream chats.)

TRUreporting often livestreams multiple times per week.

The channel’s total subscriber count is also still rising — meaning the QAnon theories and other partisan lies it broadcasts for monetised views are engaging more and more people on the platform.

YouTube acted “way too late,” said former Google engineer Guillaume Chaslot, who helped design YouTube’s algorithm. “The harm that’s been done in many cases can’t now be undone.”

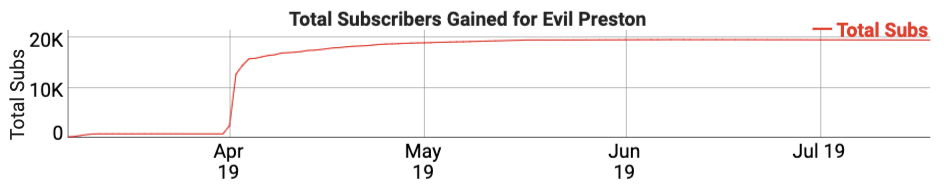

It’s not just established conspiracy theory channels that have managed to thrive in spite of YouTube’s attempt to contain them. More than two months after the company said it would crack down on disinformation, the minuscule channel Evil Preston posted a single conspiracy theory video about the death of American rapper Nipsey Hussle that instantly went viral. It drew in 3.1 million views and launched the page’s subscriber numbers from fewer than 800 to more than 12,000 in just two days. Evil Preston has since levelled out at around 20,000 subscribers and continues to spread fake news about Hussle and others to its new base.

YouTube’s effort to rein in the spread of disinformation on its platform is a work in progress that comes as “part of our ongoing efforts to improve the user experience across our site,” the company spokesperson told HuffPost, stressing that this content represents a tiny fraction of all videos on YouTube. “We are continuing to apply this change gradually in the U.S. and as we improve our recommendation systems and increase their accuracy over time, we will be rolling it out to more countries.”

In March, amid ongoing calls to demonetise or ban conspiracy theory videos altogether, YouTube rolled out a measure aimed at debunking them instead: It started adding links to related Wikipedia pages next to YouTube videos spreading false information.

The links, which are intended to provide users with contextual information so they can draw their own conclusions, are “as helpful as putting warning labels on cigarettes,” said Chaslot. “It’s better than nothing, but I’m not sure that it’s actually effective.”

For videos contending that the earth is flat or the moon landing never happened, viewers may be directed to Wikipedia pages that explain the opposite is true. But for other, more dangerous videos, such as those promoting the Frazzledrip theory, Wikipedia offers no solution.

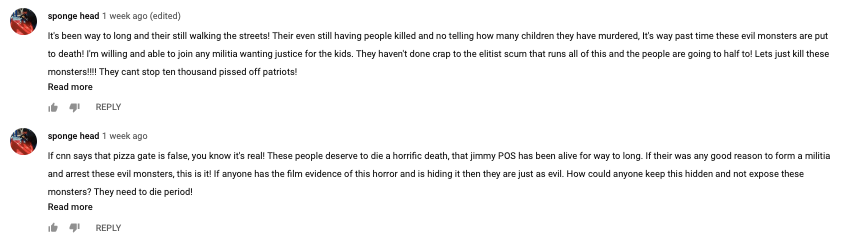

There’s no page dedicated to explaining that former Democratic presidential nominee Hillary Clinton and her top aide Huma Abedin did not, in fact, rape a little girl, cut off her face and wear it as their own, as the viral theory suggests. It’s absurd, but on YouTube’s many Frazzledrip videos, people have left comments demanding to know why the mainstream media has ignored the story and calling for Clinton and Abedin to be brutally killed. One such video has nearly 1 million views.

Letting conspiracy theories run wild online has led to offline violence in the past: In 2016, a man fired a rifle inside a Washington pizzeria after watching a YouTube video that falsely claimed the eatery was the headquarters for a child sex-trafficking ring. And just last year, another man who embraced anti-Semitic conspiracy theories killed 11 worshippers in a Pittsburgh synagogue.

The spread of disinformation on social media platforms can also cause “increased distrust in democratic institutions and a threat to productive democratic political processes,” said Becca Lewis, an affiliate researcher at Data & Society. YouTube serves as an increasingly popular alternative to traditional news sources, she noted, and in recent years, it has created an atmosphere where fringe content excels and is rewarded even without algorithmic support.

“If a creator has already started to grow an audience, that audience will seek out their content whether it’s going up in the algorithm or not,” Lewis said. “In many cases, this change [to YouTube’s algorithm] is too little, too late.”

K. Sophie Will contributed reporting.